|

Getting your Trinity Audio player ready...

|

Extracting maximum value from an AI cluster requires an uncompromising, end-to-end system-level optimization journey. While this does not mean it cannot be done quickly, it involves a process that must start deep within the individual compute server and extend seamlessly across the entire network fabric. To achieve industry leading AI inference performance, you cannot afford bottlenecks at any layer.

In this blog post, we share our hands-on experience optimizing an AI cluster built on AMD Instinct MI355X GPUs. We walk through how we first validated the optimal host configuration. We then explore how we tuned network parameters to ensure that the entire system runs smoothly and delivers industry-leading results. Our goal is also to contribute practical, repeatable optimization insights that can help advance industry understanding of AMD-based AI clusters.

Single-node optimization: building the right foundation

Before successfully scaling out a massive AI cluster, the foundation must be flawless. Scaling a poorly optimized single node only magnifies its inefficiencies across the entire network. For this reason, our optimization journey started at the host level.

The DriveNets Infrastructure Services (DIS) team applied a structured set of base optimizations to each compute node to create a highly tuned single-node environment. This included identifying the precise combination of drivers, operating system parameters, GPU firmware, and BIOS configurations to ensure that the hardware, operating system, and computing environments were in perfect harmony. All this was done well before we began routing traffic across the switches.

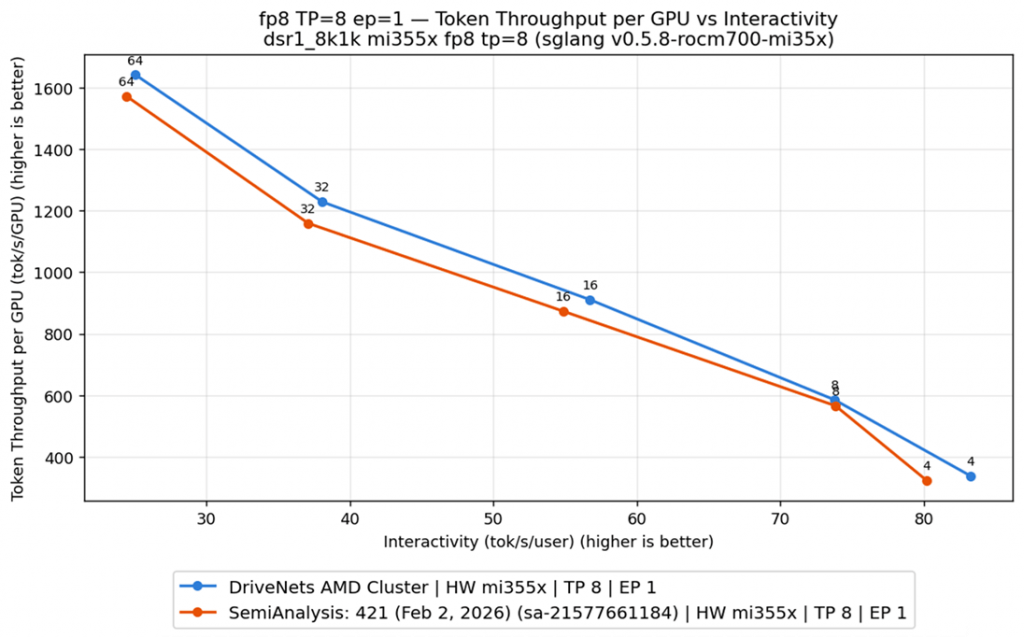

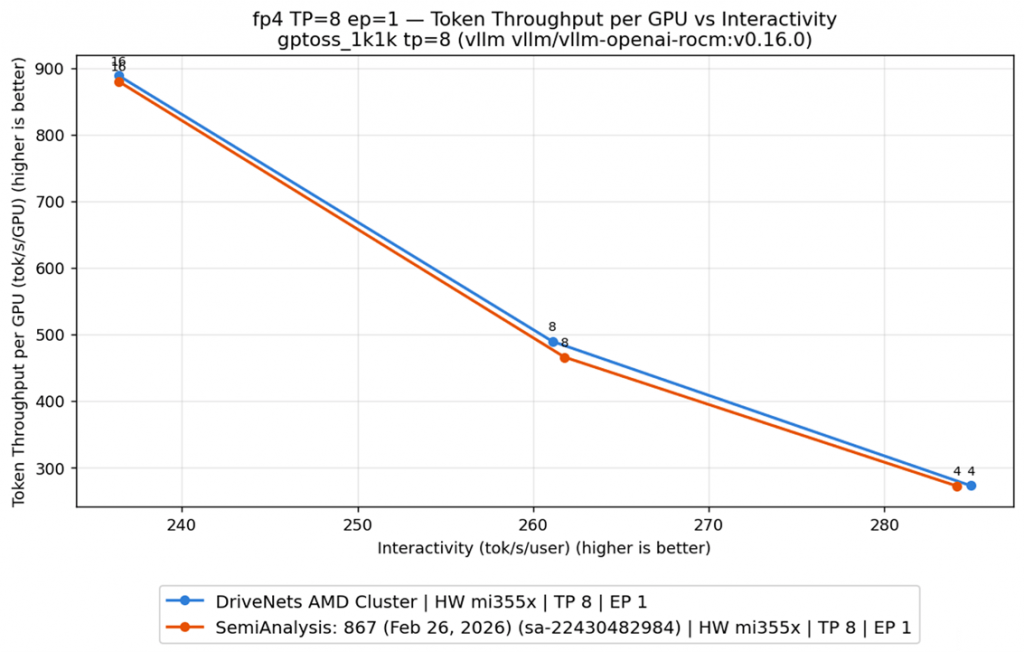

As seen in the graphics below, this host-level tuning alone delivered measurable results:

DeepSeek-R1 InferenceX results

Latency vs efficiency curve (throughput per GPU and interactivity.)

GPT-OSS-120B (FP4) InferenceX results

Latency vs efficiency curve (throughput per GPU and interactivity.)

When evaluating single-node inference tests, the DriveNets optimized AMD cluster achieved improved total throughput and faster time to first token (TTFT) relative to industry-standard reference results from InferenceX benchmark suite. We use InferenceX here strictly as a standardized baseline for apples-to-apples comparison, and to help the broader community anchor results on a common reference point.

For example, when running the GPT-OSS-120B benchmark, our optimized host-level configuration achieved higher throughput per GPU across the measured operating points, while also delivering stronger interactivity. Interactivity is a critical metric for user experience, as it reflects how responsive and efficient an AI application feels to the end user under load.

By optimizing the host configuration, we made sure performance was optimal before looking at the network aspect. Furthermore, our tests extended beyond a single model, as we incorporated tests for heavy, diverse workloads like GPT-OSS-120B (FP4 Format) and Z-Image-Turbo for diffusion models. This ensured that our host-level performance can apply universally across different types of demanding AI models. Just as importantly, we are sharing our results and insights so others tuning AMD-based systems can reduce trial-and-error and deliver stable, high-performance workloads.

Optimization phase two: multi-node performance

Once the host level is perfected, the true challenge of modern AI infrastructure begins: the network. Scaling AI inference across multiple nodes introduces immense networking complexity. This separation of prefill and decode tasks requires relentless, high-bandwidth, and ultra-low-latency communication between nodes.

To achieve optimal multi-node performance, we shifted our focus to deep network tuning. We carefully tuned key network parameters, adjusted congestion control mechanisms, and measured how each switch setting impacted the workload.

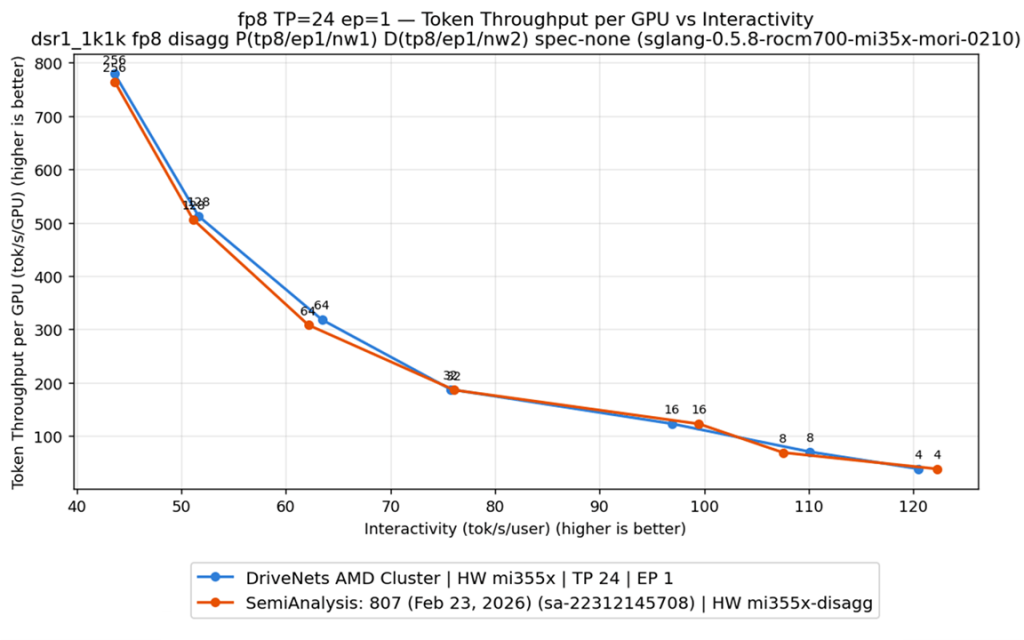

The results of this network optimization can be seen in the following multi-node testing data:

DeepSeek-R1 FP8 multi-node disaggregated inference 1P2D

Latency vs efficiency curve (throughput per GPU and interactivity)

To ensure complete transparency and a clean 1:1 comparison, we ran these multi-node tests using the exact same SemiAnalysis InferenceX containers and test methodology. Relative to the InferenceX reference baseline, our system-level approach delivered higher or comparable throughput per GPU across the measured operating points, while also maintaining strong interactivity under load.

What’s especially notable is that the DriveNets AI fabric improvements grew as concurrency increased from 64 and beyond. This validates the DriveNets AI fabric architecture and system-level tuning, demonstrating higher sustained throughput and stable latency under load.

Inside the DriveNets full-stack AI fabric approach

What makes this level of performance repeatable with any other architecture? The answer lies in DriveNets AI fabric’s end-to-end full-stack approach to networking. We recognize that AI workloads are different from one another, and that they evolve. This means that infrastructure optimization cannot be a one-time event, and that it should be executed faster.

DriveNets full-stack AI fabric approach

To manage this, we utilize our AI Cluster Orchestrator, which breaks deployment and tuning into a multi-staged process. It begins with the Fabric Provisioning Engine establishing a host-optimized configuration by automating and simplifying the route to the perfect driver, OS, GPU and BIOS configuration. We then validate this host performance using our RDMA Runner to ensure configurations are aligned with our performance goals. Only then do we move to multi-node networking with our integrated Benchmarking Engine. This engine allows us to automatically tune AI fabric elements, such as switches and NICs, and more quickly adjust network and congestion settings, while comparing performance with different AI inference workloads.

Our AI Cluster Orchestrator continuously tracks historical industry knowledge. This enables us to quickly evaluate the precise impact of network adjustments, switching parameters, and node allocations on disaggregated prefill and decode performance.

Since AI is moving faster than any technology we have ever seen, we are pushing our AI fabric tools to align with the latest available information. For example, the results detailed above were not run on outdated, stable-release software from months ago, rather, they were executed using the latest SemiAnalysis InferenceX benchmark containers, utilizing software containers that were only days old. By testing and validating against the latest AI developments, we ensure that our AI infrastructure performance is always up-to-date and continuously capable of tracking and, in multiple tested scenarios, exceeding published benchmark reference results.

True AI performance requires an end-to-end system optimization strategy

The data above validates what we want to share with the industry: maximum AI cluster performance comes from end-to-end optimization. True AI performance requires an end-to-end system optimization strategy. It begins with the base configuration of a single host, ensuring the compute node is perfectly tuned to process data as efficiently as possible. Only then do we move to network fabric tuning, ensuring that multi-node clusters can handle growing concurrency without buckling under the pressure.

By following this approach, DriveNets helps raise the performance bar for AMD-based AI workloads through an end-to-end optimization approach. By publishing our methodology and measured results, we aim to help the industry get more from AMD-based AI clusters.

Key Takeaways

- AI performance requires end-to-end system optimization — not just GPUs

Simply deploying hardware is not enough; maximum performance demands coordinated tuning from single-node configuration through full network fabric. - Single-node host tuning delivers major gains

Optimizing drivers, OS parameters, firmware, and BIOS led to measurable improvements — including 15% faster TTFT and higher throughput versus baseline benchmarks. - Network tuning is critical for multi-node scale

Deep optimization of congestion control, switches, and fabric settings enabled up to 5% higher throughput and 12–16% faster TTFT, with performance improving at higher concurrency. - DriveNets’ full-stack AI fabric enables repeatable, benchmark-leading results

Through its AI Cluster Orchestrator and continuous benchmarking approach, DriveNets ensures infrastructure stays aligned with the latest AI workloads and consistently exceeds industry benchmarks.

Frequently Asked Questions

Why isn’t deploying GPUs enough to achieve optimal AI cluster performance?

Because AI performance depends on end-to-end system-level optimization, not just hardware installation. True performance requires careful tuning of host configurations (drivers, OS, firmware, BIOS) and network fabric settings to eliminate bottlenecks across the entire stack.

What performance improvements were achieved through host and network optimization?

Host-level tuning delivered measurable gains, including 15% faster Time to First Token (TTFT) in single-node tests. In multi-node scaling, DriveNets achieved up to 5% higher total throughput and 12–16% faster TTFT compared to the InferenceX results.

How does DriveNets ensure repeatable, high-performance AI infrastructure optimization?

DriveNets uses a full-stack AI fabric approach powered by its AI Cluster Orchestrator, which automates host configuration, validates performance, tunes network parameters, and continuously benchmarks against the latest AI workloads to maintain industry-leading results.

Related content for AI networking infrastructure

DriveNets AI Networking Solution

Latest Resources on AI Networking: Videos, White Papers, etc

White Paper

Scaling AI Clusters Across Multi-Site Deployments