Blog

AI workloads have moved beyond experimentation into deep production environments. Today, performance is no longer about peak FLOPS (floating-point operations...

Read more

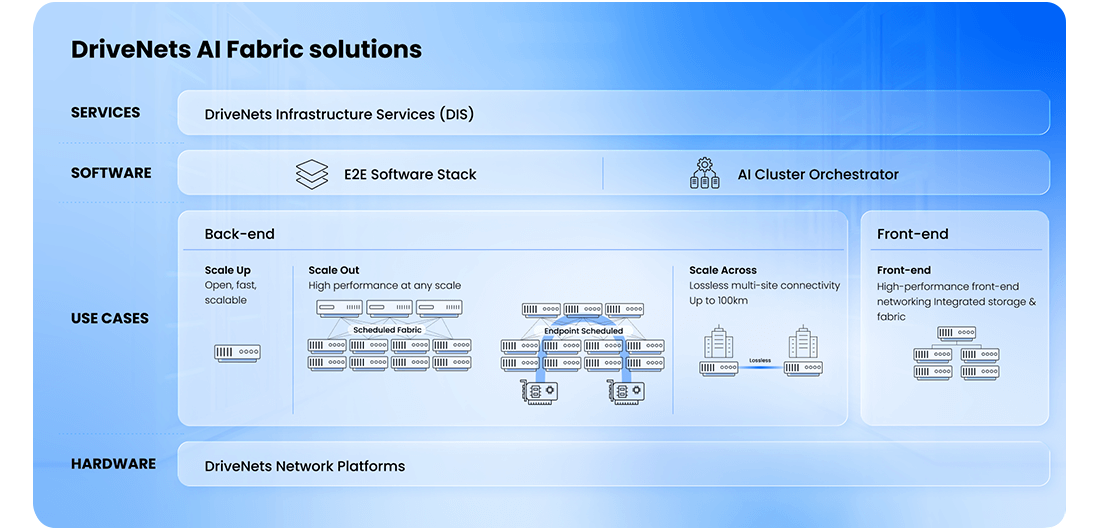

Highest-performance full-stack networking for AI Infrastructure: hardware, software, orchestration, and deployment/optimization services

AMD and DriveNets have released a validated reference architecture document for clusters built with AMD Instinct MI355X GPUs, AMD Pollara NICs, and DriveNets scale-out and frontend solutions.

The reference architecture document provides a comprehensive end-to-end blueprint for building a high-performance, scalable AI GPU cluster, and a repeatable deployment model that reduces integration and configuration risk.

Hardware Specifications

DriveNets AI Fabric offers a solution for every part of the networking fabric, including:

DriveNets provides an end-to-end software stack, including:

DNOS: a network operating system that runs on multiple hardware options

AI Cluster Orchestrator: a lifecycle orchestration system with engines tailored for:

End-to-end professional services for any GPU fabric deployment, including:

Bring-up services

Software services

White Papers

This paper outlines the networking challenges and key considerations for expanding AI clusters across geographically distributed sites....

Read more

Blog

If you have built an AI cluster, you already know it is not a simple task. It is not just...

Read more