
But the rise of mixture-of-experts (MoE) architectures has fundamentally shifted that equation. MoE dramatically reduces the compute needed per token, and in doing so, exposes a new bottleneck that no amount of GPU investment can fix: the network that connects those GPUs.

MoE generates unpredictable cross-node data transfers that the network must handle

Death of the Plannable Network

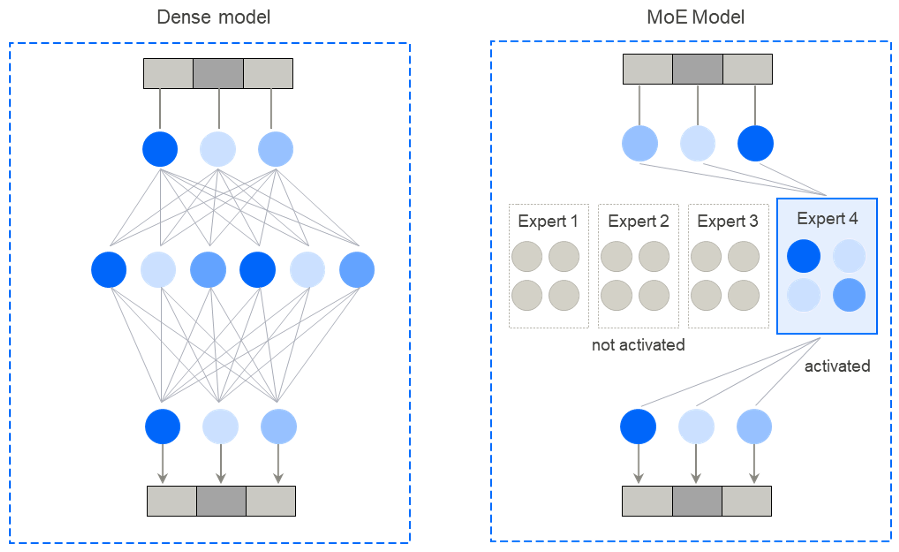

In a traditional dense model, every token activates every parameter in the network. In a 400-billion-parameter model, each token processed touches all 400 billion parameters, at roughly one multiply-add apiece. The compute cost is enormous.

But there is a hidden upside: predictability. We do not know in advance the outcome of each computation, but we do know exactly which GPUs will exchange information, when, and how much. The communication pattern is fixed by the architecture itself, before a single calculation runs. This allows engineers to build and optimize the network around a known, stable blueprint. The mechanism for this communication is a set of structured collective operations, such as All-Reduce, All-Gather, and Reduce-Scatter. Because the network behaves predictably, these operations can be tuned to near-maximum efficiency. Like dancers performing a choreographed routine, every GPU knows its position and its next move before the music even starts.
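Because the pattern is fixed, the traffic a collective generates can be worked out before the job starts. The sketch below assumes a ring all-reduce, which is one common algorithm and not necessarily what any given cluster uses; the function name and the 1 GiB message size are hypothetical.

```python
def ring_allreduce_bytes_per_gpu(message_bytes: int, num_gpus: int) -> float:
    """Bytes each GPU sends during a ring all-reduce of `message_bytes`.

    A ring all-reduce is a reduce-scatter followed by an all-gather. Each
    phase sends (num_gpus - 1) chunks of size message_bytes / num_gpus.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return 2 * (num_gpus - 1) / num_gpus * message_bytes


# Hypothetical example: 1 GiB of gradients synchronized across 8 GPUs.
GIB = 1024 ** 3
sent = ring_allreduce_bytes_per_gpu(GIB, 8)
print(f"each GPU sends {sent / GIB:.2f} GiB per all-reduce")
```

Nothing in the calculation depends on the input data, which is why dense-model traffic can be planned and tuned in advance.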

Dense models delivered remarkable results, but they came with a heavy cost. Running every token through every parameter is expensive, and scaling out across more nodes only makes it worse. More nodes mean more communication, and keeping everything in sync is no small task. The further you scaled, the higher the bill, with no obvious way out. That is, until one insight changed everything.

MoE changed how workloads scale and perform

One question challenged the most basic assumption underlying dense models: does every token really need to activate every parameter? The answer, it turned out, was no. That question gave birth to the Mixture-of-Experts architecture, a concept that has reshaped how the industry thinks about scaling. Rather than one generalist neural network handling every token at full weight, MoE introduces a set of small, specialized neural networks and a lightweight router that decides, for each token, which subset of experts to activate.
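The routing step can be illustrated with a small top-k gate. This is a minimal sketch, not the router of any particular model: the random scores stand in for a learned router's output, and `route_token`, the expert count of 8, and `top_k=2` are all assumptions made for illustration.

```python
import math
import random


def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k=2):
    """Return [(expert_id, weight), ...] for the top_k highest-scoring experts.

    Weights are renormalized over the selected experts, one common choice.
    """
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


# Hypothetical setup: 8 experts, random scores in place of a learned router.
rng = random.Random(0)
for token_id in range(3):
    scores = [rng.gauss(0.0, 1.0) for _ in range(8)]
    print(token_id, route_token(scores))
```

Each token ends up with its own pair of experts, so the destination of its data is only known once the scores have been computed.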

The gains are significant on two fronts:

- Capacity: MoE models can store far more knowledge than a dense model with the same per-token compute budget. A trillion-parameter model has absorbed vastly more during training, and the routing mechanism makes that knowledge accessible at inference time without activating all of it on every token.

- Efficiency: because only a small fraction of the neural network activates per token, compute costs drop dramatically. A model like Kimi K2 has roughly a trillion parameters in total but activates only around 32 billion per token, yet matches or outperforms dense models that activate all 72B+ of their parameters.
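The efficiency figures above reduce to simple arithmetic. The sketch below uses the parameter counts quoted in the text and assumes, as a rough approximation, that per-token compute scales with active parameters (it ignores attention and router overhead); `active_fraction` is a hypothetical helper name.

```python
def active_fraction(active_params: float, total_params: float) -> float:
    """Share of a model's parameters that a single token activates."""
    return active_params / total_params


# Figures quoted in the text (approximate).
MOE_TOTAL = 1e12      # roughly a trillion parameters in total
MOE_ACTIVE = 32e9     # roughly 32 billion active per token
DENSE_ACTIVE = 72e9   # a dense model activates all of its parameters

print(f"MoE activates {active_fraction(MOE_ACTIVE, MOE_TOTAL):.1%} of its parameters per token")
print(f"The dense model touches {DENSE_ACTIVE / MOE_ACTIVE:.2f}x as many parameters per token")
```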

Apple researchers confirmed this: MoE models consistently outperform dense models of equivalent active-parameter count on MMLU and reasoning benchmarks, even when the test was set up to favor dense. Less compute per token, better results.

Yet the impact of MoE goes beyond smarter, leaner computation. It changes the nature of the scaling constraint entirely. Because experts are relatively small, specialized components, an MoE model can be distributed across multiple nodes efficiently, with comparatively small messages traveling between them. The barrier is no longer fitting the entire model on a single machine. For algorithm teams, this is a game-changer. Model designs that were once theoretically brilliant but practically unrunnable may now be viable.

These benefits, however, come with a new demand on the network. Where dense models communicate like a choreographed group, MoE is more like a group of improvisers:

- Unpredictable traffic: each token follows a different route, determined only at runtime by the input itself. Traffic patterns shift with every batch, and the load across GPUs can be highly uneven.

- Hotspots: many tokens may be routed to the same expert simultaneously, creating hotspots on some GPUs while other GPUs sit underutilized.

- Cross-node complexity: huge MoE models are designed to span multiple nodes, adding cross-node communication as an entirely new layer of complexity to manage.
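The three effects above can be reproduced with a toy simulation. It is a sketch under stated assumptions rather than a model of any real system: one expert per GPU, 8 GPUs per node, and a made-up popularity skew in place of a learned router. All names and parameters are hypothetical.

```python
import random
from collections import Counter


def simulate_batch(num_tokens, num_experts, gpus_per_node, top_k, skew, seed):
    """Route tokens to experts (one expert per GPU) and summarize the traffic.

    Returns (tokens_per_expert, fraction_of_transfers_that_cross_nodes).
    """
    rng = random.Random(seed)
    # Expert e gets popularity 1 / (e + 1) ** skew; skew = 0 is uniform.
    weights = [1.0 / (e + 1) ** skew for e in range(num_experts)]
    load = Counter()
    cross_node = 0
    for _ in range(num_tokens):
        source_gpu = rng.randrange(num_experts)  # GPU currently holding the token
        chosen = set()
        while len(chosen) < top_k:  # draw top_k distinct experts
            chosen.add(rng.choices(range(num_experts), weights=weights)[0])
        for expert in chosen:
            load[expert] += 1
            if expert // gpus_per_node != source_gpu // gpus_per_node:
                cross_node += 1
    return load, cross_node / (num_tokens * top_k)


# Two batches with identical settings but different inputs (seeds).
for seed in (0, 1):
    load, cross = simulate_batch(4096, 16, 8, 2, 1.0, seed)
    mean_load = sum(load.values()) / 16
    print(f"batch {seed}: busiest expert at {max(load.values()) / mean_load:.2f}x "
          f"the mean load, {cross:.0%} of transfers cross nodes")
```

The per-expert counts differ from batch to batch, the busiest expert sits well above the mean, and a sizable share of transfers leaves the node, none of which is known until the tokens have been routed.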

This shift puts networking at the center. As MoE cuts compute costs per token, the network’s share of the total cost grows. An idle GPU waiting on a network response is wasted investment. The question is no longer how much compute you have, but how well you connect it.
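A back-of-the-envelope calculation shows why the network's share grows. The sketch assumes compute and communication do not overlap and uses invented millisecond figures; `network_share` is a hypothetical helper.

```python
def network_share(compute_ms: float, network_ms: float) -> float:
    """Fraction of a non-overlapped step spent waiting on the network."""
    return network_ms / (compute_ms + network_ms)


# Hypothetical: network time stays at 10 ms while compute per step shrinks.
for compute_ms in (90.0, 40.0, 10.0):
    share = network_share(compute_ms, 10.0)
    print(f"compute {compute_ms:>4.0f} ms -> network share {share:.0%}")
```

With the network time held constant, its share of the step rises from 10% to 50% as compute drops from 90 ms to 10 ms.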

Universal Performance Ceiling

This isn’t just a theoretical worry. Major AI labs, whether they build on Nvidia, AMD, or Google TPUs, have identified networking as a critical area of investment:

- DeepSeek reported a roughly 1:1 compute-to-communication ratio during V3 training, meaning GPUs spent about as much time on all-to-all communication as on useful compute. The problem was severe enough that the company developed DualPipe, a new pipeline parallelism algorithm that hides network overhead by allowing computation and communication to proceed simultaneously rather than sequentially.

- Meta also found that expert-routing all-to-all traffic accounted for 10-30% of per-token latency in production MoE serving. They addressed the problem from both ends: by reducing the communication overhead of expert parallelism, including more efficient handling of all-to-all traffic, and by adopting a fundamentally different network architecture (scheduled fabric) to move what remains without congestion.

- Google has already indicated that distributed AI clusters become network-bound even before adding MoE into the mix, as scaling efficiency declines when workloads move from TPU interconnects onto the datacenter network in massive clusters. Its solution was to keep expanding the boundary of “inside the fast interconnect” so that more workloads never have to touch the traditional network at all, using OCS (optical circuit switching) architecture.
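The value of overlapping computation with communication, the idea behind approaches such as DualPipe, can be sketched with an idealized bound. This is not DualPipe's actual schedule: it simply compares a fully serial step with a perfectly overlapped one, and the function names and timings are hypothetical.

```python
def step_time(compute_ms: float, comm_ms: float, overlap: bool = False) -> float:
    """Idealized step time: the serial sum, or the longer phase if fully overlapped."""
    return max(compute_ms, comm_ms) if overlap else compute_ms + comm_ms


def gpu_utilization(compute_ms: float, comm_ms: float, overlap: bool = False) -> float:
    """Share of the step during which the GPU is doing useful compute."""
    return compute_ms / step_time(compute_ms, comm_ms, overlap)


# A 1:1 compute-to-communication ratio, as reported for DeepSeek V3 training.
print(f"serial:     {gpu_utilization(10.0, 10.0):.0%} utilization")
print(f"overlapped: {gpu_utilization(10.0, 10.0, overlap=True):.0%} utilization")
```

At a 1:1 ratio, a serial schedule leaves the GPU busy only half the time, while perfect overlap would hide the communication entirely. Real schedules land somewhere in between.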

The pattern is clear: networking is the main bottleneck. Teams achieving the best results are no longer those with the most powerful compute, but those with the deepest understanding of their network constraints and the architectural creativity to work around them. With each new generation of faster chips, the gap between compute speed and network speed continues to widen.

DriveNets Approach

Getting the most out of an AI cluster now requires more than just raw compute. It requires the right network architecture and the ability to optimize the communication stack around it. While companies such as DeepSeek, Google, and Meta built those capabilities internally, most organizations do not have the specialized networking expertise needed to do so.

DriveNets brings that expertise from more than a decade of building large-scale networking solutions. Its network operating system runs on thousands of switching platforms globally, handling petabytes of daily traffic in some of the world’s largest networks.

Today, DriveNets applies that experience to AI infrastructure, enabling customers to get more performance from multi-GPU clusters built on Nvidia and AMD Instinct accelerators. Over the past few years, DriveNets has invested heavily in AI fabrics, building a full-stack networking solution designed to close the gap between peak GPU capability and real-world GPU utilization.

This includes both architectural and software optimization. Fabric-Scheduled Ethernet (FSE) and Endpoint-Scheduled Ethernet (ESE) give customers the flexibility to choose the network design that best fits their scale, workload, and budget. Moreover, DriveNets’ experience with Nvidia AI workloads and its close collaboration with AMD enable deeper communication-stack optimization, including RCCL tuning for both single-node and multi-node environments.

It’s All About the Network

Networking has long been a key scaling bottleneck in large AI clusters, and the shift toward MoE architectures has increased network pressure even further. For organizations building AI infrastructure on Nvidia or AMD, the opportunity is clear. The compute is there. The models are there. What now determines whether that investment delivers its full potential is how well the network is understood, designed, and optimized.

Key Takeaways

- The Bottleneck has Shifted from Compute to Network

The AI industry has long focused on raw processing power as the primary driver of performance. However, MoE architectures reduce the compute needed per token, which in turn exposes a new bottleneck: the network connecting the GPUs. As compute costs drop, the network’s share of the total system cost and performance impact grows.

- MoE Replaces “Choreography” with “Improvisation”

Dense models operate with high predictability, following a “choreographed” communication routine where GPU data exchange is fixed by the architecture. In contrast, MoE models are “improvisational”; traffic patterns are determined at runtime by the input itself. This unpredictability leads to uneven GPU loads and data “hotspots” that traditional networks struggle to manage.

- Communication Latency is Reaching a 1:1 Ratio with Compute

The networking challenge is no longer theoretical; major AI labs are seeing massive overhead. For example, DeepSeek reported a 1:1 compute-to-communication ratio during training, meaning GPUs spent as much time “talking” as they did “thinking”. Similarly, Meta found that expert-routing traffic can account for up to 30% of latency in production MoE serving.

- Standard Datacenter Networks are the New Performance Ceiling

Even before MoE, massive clusters were becoming network-bound when workloads moved from fast interconnects to traditional datacenter networks. To achieve peak performance, teams must now look beyond standard Ethernet and adopt advanced solutions like optical circuit switching (OCS) or scheduled fabrics to prevent congestion.

Frequently Asked Questions

Why is networking now the primary bottleneck for Mixture-of-Experts (MoE) architectures?

Networking becomes the primary bottleneck because MoE models generate unpredictable, cross-node data transfers determined at runtime by input tokens. Unlike the fixed communication patterns of dense models, MoE traffic is stochastic, creating GPU hotspots and uneven loads. This shift makes interconnect performance more critical than raw GPU compute for scaling.

What impact does communication overhead have on the performance of MoE models?

Communication overhead significantly impacts latency, with expert-routing traffic accounting for 10-30% of per-token latency in production MoE serving at Meta. DeepSeek reported a roughly 1:1 compute-to-communication ratio during V3 training, meaning GPUs spent about as much time on data transfer as on actual processing. Reducing this overhead requires techniques such as pipeline parallelism that overlaps computation with communication, or scheduled network fabrics.

How do Mixture-of-Experts (MoE) models achieve greater efficiency than dense models?

MoE models achieve efficiency by activating only a small subset of specialized “expert” parameters for each token rather than the entire network. For example, the Kimi K2 model contains roughly one trillion total parameters but activates only around 32 billion per token. This approach matches or outperforms dense models that activate 72 billion or more parameters per token, while dramatically reducing the compute cost per token.
