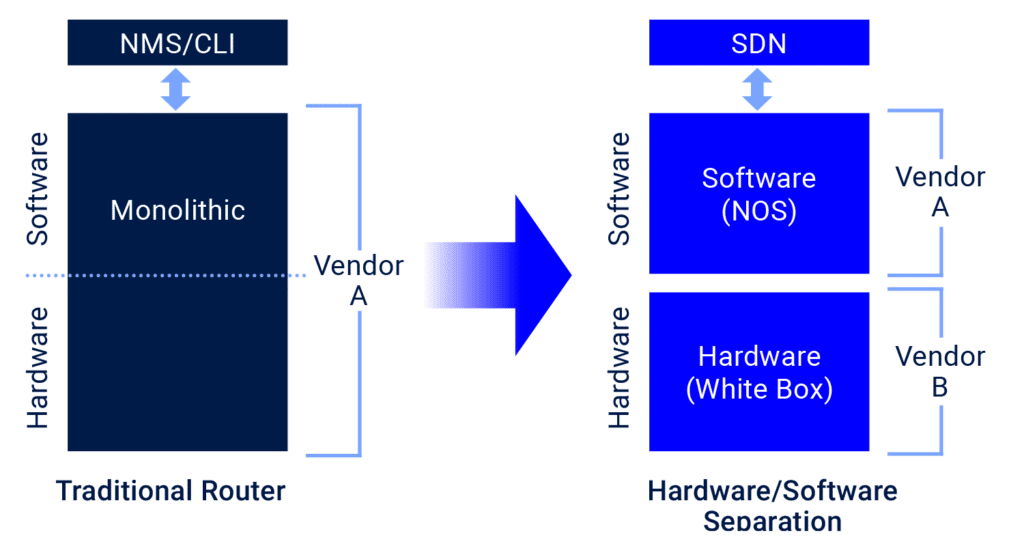

Step 1: Disaggregation (of software and hardware)

This is the simplest step, as it is one of the oldest tricks in the book. Vertical disaggregation (or hardware-software disaggregation) makes the networking challenge a software-based one. Using commercial off-the-shelf networking hardware, aka white boxes, the hardware part of the solution is simplified into common building blocks from multiple vendors that cost less and perform better. This brings the solution's cost structure within the operator's budgetary constraints and adds levels of flexibility and scalability that a monolithic solution cannot achieve.

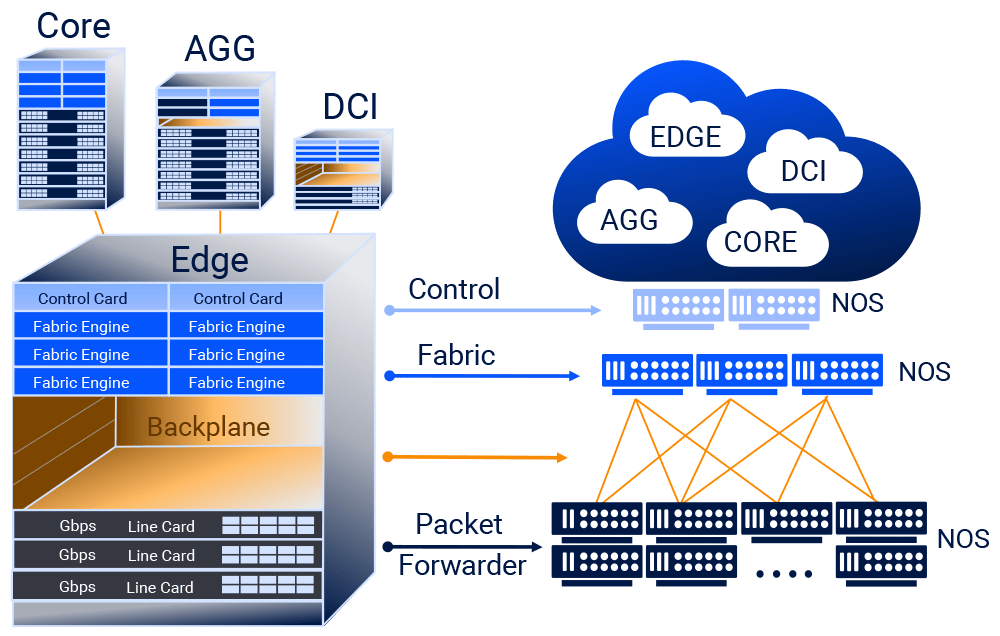

Step 2: Distribution (across multiple hardware instances)

Yet running a software-based network function (NF) over a single white box is limited – in terms of capacity, interfaces, TCAM size and other parameters – by the capabilities of that one white box. In order to make the solution scale optimally, without the need for multiple sizes and types of white boxes, we need to distribute this NF service instance (SI) across multiple boxes.

For this, we need a smart abstraction layer that can take a cluster of white boxes and abstract all the resources of those boxes into a single pool of resources. This act of distribution is done in software and allows the SI that runs above it to see the cluster of white boxes as if it were a single hardware entity. This means that it's possible to use a couple of basic hardware building blocks to build a router of any scale by simply multiplying those building blocks into a cluster. This cluster is used as a single hardware instance, with all the resources in the boxes accessible by any software that runs on top of the abstraction layer.
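To make the pooling idea concrete, here is a minimal sketch of an abstraction layer that presents a cluster of white boxes as one pool. The names (`WhiteBox`, `ResourcePool`, `pool_cluster`) and the example figures are hypothetical and chosen for illustration only. The sketch also assumes resources simply add up; a real cluster would spend some ports and capacity on the interconnect between boxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WhiteBox:
    """One building block (hypothetical model, illustrative fields only)."""
    name: str
    ports: int
    capacity_gbps: int
    tcam_entries: int


@dataclass(frozen=True)
class ResourcePool:
    """What the software above the abstraction layer sees: one entity."""
    ports: int
    capacity_gbps: int
    tcam_entries: int


def pool_cluster(boxes):
    """Abstract a cluster into a single pool.

    Assumption: plain summation, ignoring interconnect overhead.
    """
    return ResourcePool(
        ports=sum(b.ports for b in boxes),
        capacity_gbps=sum(b.capacity_gbps for b in boxes),
        tcam_entries=sum(b.tcam_entries for b in boxes),
    )


# Scaling out means multiplying the same building block.
cluster = [WhiteBox(f"box-{i}", ports=40, capacity_gbps=4000, tcam_entries=100_000)
           for i in range(3)]
pool = pool_cluster(cluster)
```

In this toy model, growing the router is a matter of appending another `WhiteBox` to the list; the software above only ever sees the resulting `ResourcePool`.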

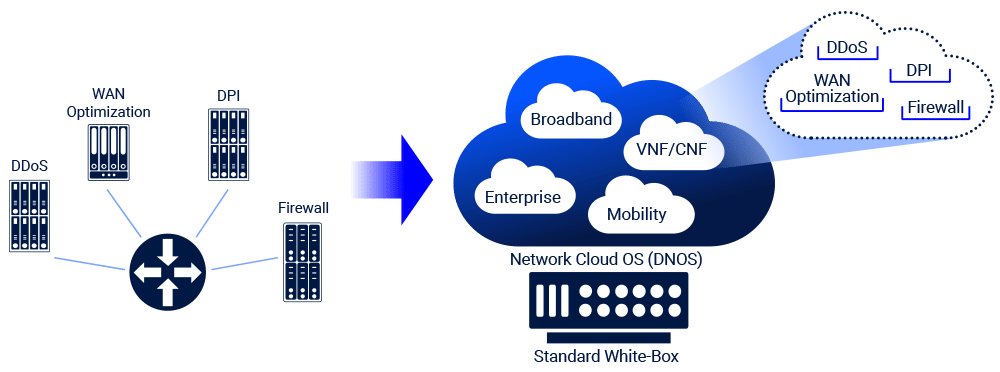

Step 3: Containerization (of multiple software instances)

Running a network function over a cluster of white boxes is great, but it's not necessarily the best use of those valuable hardware resources. To utilize those boxes fully, we need to run multiple services over this infrastructure and leverage cross-service synergies.

In order to run multiple services over the distributed hardware pool of resources we need to run each service as a service instance in a container. This well-established concept turns the disaggregated distributed network into a cloud-native network solution – or, in other words, a network cloud. The network cloud allows multiple network functions, from one or multiple software vendors, to run over a cluster of commercial off-the-shelf white boxes, from one or multiple hardware vendors. For multiple systems operators, each network function appears as a separate entity, with dedicated hardware resources and complete separation from other service instances running on the same cluster.
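The "dedicated hardware resources and complete separation" idea can be sketched as simple bookkeeping over the shared pool. This is only an illustration under stated assumptions: `NetworkCloud`, `deploy`, `free` and the resource figures are hypothetical names and numbers, not part of any real product API, and real isolation involves far more than counting ports.

```python
class ResourcePoolExhausted(Exception):
    """Raised when a request exceeds what is still free in the pool."""


class NetworkCloud:
    """Toy model: a shared pool carved into dedicated per-instance slices."""

    def __init__(self, ports: int, capacity_gbps: int):
        self.total = {"ports": ports, "capacity_gbps": capacity_gbps}
        self.instances = {}  # service instance name -> dedicated resources

    def free(self) -> dict:
        """Resources not yet dedicated to any service instance."""
        used = {key: 0 for key in self.total}
        for resources in self.instances.values():
            for key, amount in resources.items():
                used[key] += amount
        return {key: self.total[key] - used[key] for key in self.total}

    def deploy(self, name: str, ports: int, capacity_gbps: int) -> None:
        """Dedicate a slice of the pool to a new service instance."""
        if name in self.instances:
            raise ValueError(f"{name} is already deployed")
        request = {"ports": ports, "capacity_gbps": capacity_gbps}
        free = self.free()
        for key, amount in request.items():
            if amount > free[key]:
                raise ResourcePoolExhausted(f"{name}: not enough {key}")
        self.instances[name] = request


# Two network functions share one cluster without sharing resources.
cloud = NetworkCloud(ports=80, capacity_gbps=8000)
cloud.deploy("core-router", ports=40, capacity_gbps=5000)
cloud.deploy("peering-router", ports=20, capacity_gbps=2000)
```

Because every slice is subtracted from the pool before the next one is granted, a request that would eat into another instance's allocation is rejected rather than oversubscribed.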

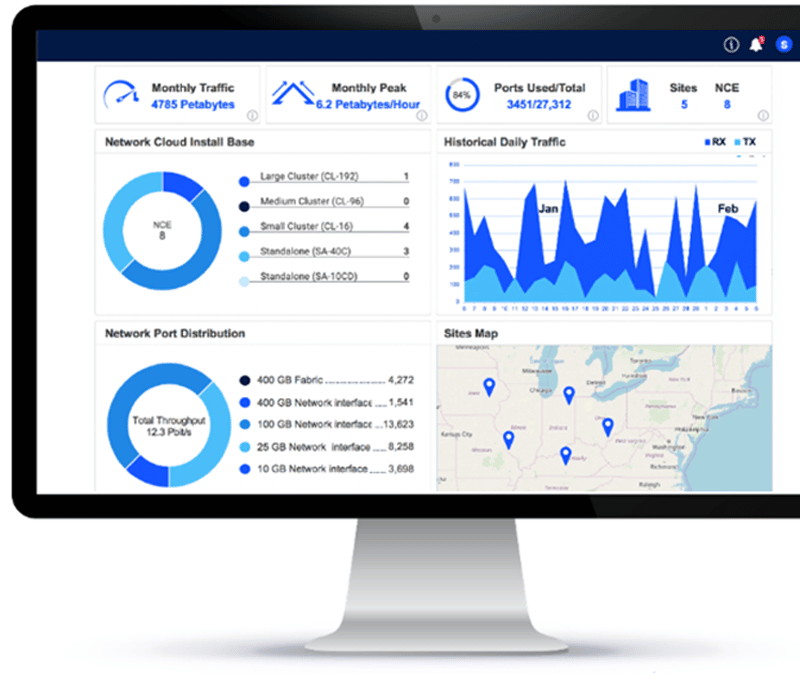

Step 4: Orchestration (of, well, everything)

So, first we have a hardware infrastructure, which is built upon a cluster of white boxes. Second, we have a software infrastructure layer that handles the distribution of software across this cluster and the abstraction of the hardware resources into a shared pool. Third, we have multiple service instances running on top of all that. It seems like a great solution that no one can use – as it sounds extremely hard to plan, manage and maintain! This is where our fourth step comes into play.

A network cloud solution must include an effective network orchestration layer that provides multiple views used by multiple network-operation and infrastructure-operation functions. One view simplifies the handling of the hardware and software infrastructure instances by automating the bring-into-service, upgrade and troubleshooting tasks of this layer. Another view of the orchestration system provides a monolithic-solution-like view of each NF service instance. Thus, even though the service instance runs as a container on top of multiple distributed software and hardware layers, the network function view presents this SI, with its allocated resources, in a simple-to-manage manner.
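As a rough sketch of the two views, the functions below derive an infrastructure view and a network function view from the same underlying state. All names, fields and version strings here are hypothetical and only illustrate the idea; they do not describe how any particular orchestrator is implemented.

```python
from dataclasses import dataclass, field


@dataclass
class Box:
    """A white box as the infrastructure team sees it (illustrative fields)."""
    name: str
    sw_version: str
    healthy: bool = True


@dataclass
class ServiceInstance:
    """A containerized network function and the resources dedicated to it."""
    name: str
    ports: int
    capacity_gbps: int
    hosted_on: list = field(default_factory=list)  # box names, hidden from NF view


def infrastructure_view(boxes, target_version):
    """Per-box status, for bring-into-service, upgrade and troubleshooting."""
    return [
        {"box": b.name, "healthy": b.healthy,
         "needs_upgrade": b.sw_version != target_version}
        for b in boxes
    ]


def network_function_view(si):
    """Present the SI as if it were one monolithic router."""
    return {"router": si.name, "ports": si.ports,
            "capacity_gbps": si.capacity_gbps}


boxes = [Box("box-0", "1.0"), Box("box-1", "1.1"), Box("box-2", "1.1", healthy=False)]
core = ServiceInstance("core-router", ports=40, capacity_gbps=5000,
                       hosted_on=["box-0", "box-1"])

infra = infrastructure_view(boxes, target_version="1.1")
router = network_function_view(core)
```

The network function view deliberately omits `hosted_on`: the network operator manages one router, while the box-level detail stays in the infrastructure view.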

Conclusion

The DriveNets Network Cloud solution follows these four steps to the letter, translating into major benefits for telecom and cloud operators.

The first two steps, disaggregation and distribution, result in a distributed disaggregated chassis (DDC) architecture that enables lowest-cost infrastructure and optimal scaling.

The third step, containerization, allows optimal scaling and software-paced innovation as it enables the introduction and addition of new services and network functions by software only, over existing hardware infrastructure.

Lastly, the fourth step of orchestration, implemented by DriveNets Orchestrator (DNOR), allows software-paced innovation as well as cost reduction, primarily on the operations side.